Your News Aggregator API works perfectly. You can show it to a friend, if they sit next to you. You can demo it to a recruiter, if you schedule a call and hope your WiFi holds. You can handle user traffic, if users only visit when your laptop is awake. Production-ready code trapped in a local environment isn't production-ready at all.

Local vs. Production Environments

Running code locally and running it in production are fundamentally different challenges. Local development means one user, predictable load, direct access to logs, and manual restarts when things break. Production means thousands of concurrent users, unpredictable traffic spikes, servers in data centers you'll never see, and systems that must recover automatically when failures occur.

Your laptop isn't a server

Your laptop goes to sleep when you close the lid. It has no redundancy if the hard drive fails. Its IP address changes whenever you switch networks. It can't handle 1,000 requests per second. Professional applications run on infrastructure designed for reliability, scalability, and availability. That infrastructure lives in the cloud.

Production requires supporting services

Your News API needs a database. In production, that database needs automated backups, failover to standby instances when the primary fails, and the ability to scale storage without downtime. Your API needs SSL certificates for HTTPS. It needs load balancing so traffic is distributed across multiple containers. It needs monitoring to detect problems before users notice them. These supporting services require infrastructure expertise.

Manual deployment doesn't scale

Manual deployment means you SSH into a server, copy files, restart services, and pray everything works. That process breaks down with multiple servers, multiple deployments per day, and team members who need to deploy safely. The alternative is cloud infrastructure assembled from managed building blocks: managed databases, serverless containers, load balancers, and centralized logging. This chapter builds that production infrastructure.

The Solution: AWS

Chapter 27 solved one problem: containerization. Your application runs identically on any machine with Docker installed. One command starts your entire stack: FastAPI, PostgreSQL, Redis. No more "works on my machine" debugging. No more missing dependencies. The containers work perfectly, but they only run where you run them.

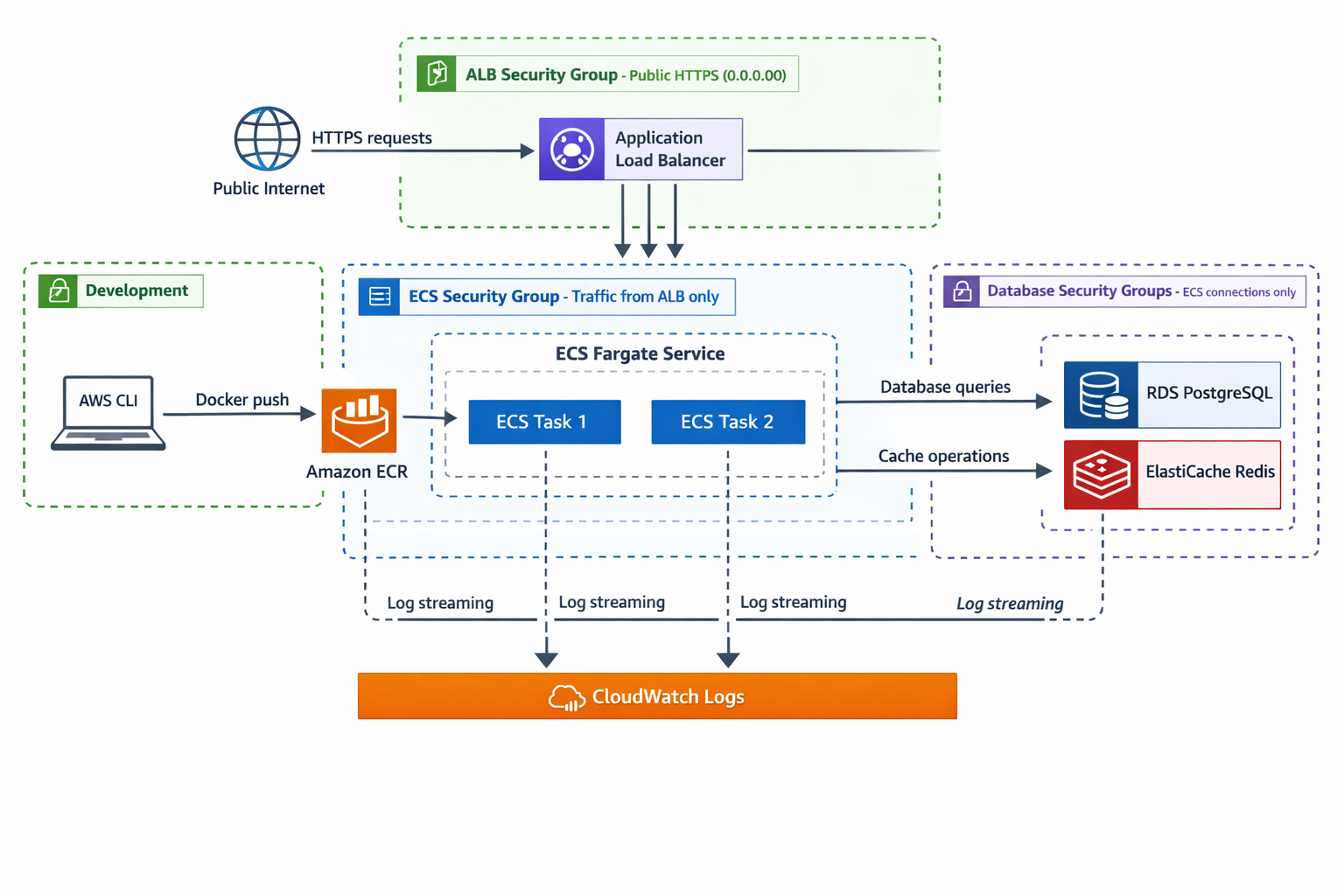

This chapter solves the deployment problem. You'll deploy your containerized News API to AWS with professional infrastructure: serverless containers on ECS Fargate, managed databases with RDS PostgreSQL and ElastiCache Redis, and public access through an Application Load Balancer, with HTTPS as an optional final step. You'll learn to view container logs in CloudWatch for troubleshooting. This isn't a proof-of-concept deployment like Railway in Chapter 26. This is production-grade infrastructure demonstrating senior-level skills.

AWS (Amazon Web Services) is the world's largest cloud computing platform, providing over 200 services for running applications in the cloud. Instead of buying and maintaining your own servers, you rent computing resources from AWS—servers, storage, databases, networking—and pay only for what you use. This chapter uses AWS because it's the industry standard: learning AWS means learning infrastructure patterns that transfer to any cloud platform and any scale of application.

You'll use six core AWS services in this chapter: ECR (stores Docker images), ECS Fargate (runs containers), RDS (managed PostgreSQL), ElastiCache (managed Redis), ALB (load balancing), and CloudWatch (logging). Section 2 explains cloud computing fundamentals and why AWS is the right choice for learning production infrastructure. For now, just know that AWS provides the infrastructure layer that makes your containers accessible globally.

By the end, you'll have a live API at a public URL, complete with automatic documentation. You'll understand how to deploy containerized applications to production, configure managed databases, set up load balancing, and view application logs. When you share this with recruiters, you're showing them: "I understand how to deploy systems to the cloud using industry-standard infrastructure patterns." That's the career transformation this chapter enables.

The services you learn here map onto concepts you'll meet on every other platform. ECS Fargate teaches container orchestration concepts that apply to Kubernetes. RDS teaches managed database patterns that apply to any cloud. CloudWatch teaches observability principles that apply everywhere.

You could deploy to Railway, Heroku, or Fly.io with less configuration. But those platforms abstract away infrastructure decisions. You don't learn how load balancers work, how databases are managed in the cloud, or how to troubleshoot production issues. AWS requires more setup, but that setup is the education. You're learning the infrastructure layer that powers modern applications. These skills separate junior developers from senior engineers.

Chapter Roadmap

This chapter takes you from a containerized application running on your laptop to a fully deployed production system on AWS. You'll progress through cloud fundamentals, account security, and then build each infrastructure layer one service at a time until your News API is live at a public URL.

Cloud Foundations and AWS Account Setup

Understand cloud computing fundamentals and the six AWS services you'll use. Create a secure AWS account with IAM users, billing alerts, and MFA protection, then configure the AWS CLI on your local machine for command-line access to all services.

Container Registry with ECR

Push your News API Docker image to Amazon ECR, AWS's container registry. You'll authenticate Docker with ECR, tag images for version tracking, and establish the image pipeline that ECS pulls from during deployment.

Managed Databases: RDS and ElastiCache

Replace local PostgreSQL and Redis containers with fully managed AWS services. Create an RDS PostgreSQL instance with automated backups and an ElastiCache Redis cluster for caching, then configure security groups for least-privilege database access.
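To make "least-privilege" concrete, here is a minimal sketch that models security group ingress rules as plain Python data. It calls no AWS API. The group names (`alb-sg`, `api-sg`, `db-sg`, `cache-sg`) are hypothetical, and the ports assume the API listens on 8000 while PostgreSQL and Redis use their default ports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IngressRule:
    port: int
    source: str  # a CIDR block, or the name of another security group


# Hypothetical groups: each layer accepts traffic only from the layer in front of it.
SECURITY_GROUPS = {
    "alb-sg": [IngressRule(80, "0.0.0.0/0"), IngressRule(443, "0.0.0.0/0")],
    "api-sg": [IngressRule(8000, "alb-sg")],   # only the load balancer reaches the API
    "db-sg": [IngressRule(5432, "api-sg")],    # only the API reaches PostgreSQL
    "cache-sg": [IngressRule(6379, "api-sg")], # only the API reaches Redis
}


def is_allowed(target: str, port: int, source: str) -> bool:
    """Return True if `source` may connect to `target` on `port`."""
    return any(
        rule.port == port and rule.source in ("0.0.0.0/0", source)
        for rule in SECURITY_GROUPS.get(target, [])
    )


print(is_allowed("db-sg", 5432, "api-sg"))    # the API can reach the database
print(is_allowed("db-sg", 5432, "internet"))  # the public internet cannot
```

The point of the layering is that the database is never exposed directly: the only path to it runs through the load balancer and the API containers.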

Deploying Containers with ECS Fargate

Deploy your containerized News API on ECS Fargate, AWS's serverless container platform. Create clusters, task definitions specifying CPU, memory, and environment variables, then launch an ECS service that maintains your desired container count with automatic restarts.

Load Balancer, HTTPS, and Going Live

Create an Application Load Balancer that gives your API a stable public URL. Configure target groups, health checks, and listeners to distribute traffic across containers. Test your live API at the ALB endpoint and optionally add HTTPS with SSL certificates.

What You'll Build: Production AWS Infrastructure

You're deploying the exact News API from Chapter 27 with no code changes. Containerization makes that possible. What changes is where your containers run and how they're supported. You'll build complete production infrastructure across multiple AWS services.

Container Registry (ECR)

AWS Elastic Container Registry stores every Docker image you push to it. You'll push your News API image to ECR, tag it properly for version tracking, and configure ECS to pull from this registry. This centralized image storage enables consistent deployments: the same image runs in development, staging, and production.
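An image stored in ECR is addressed by a registry hostname built from your account ID and region, followed by the repository name and tag. The sketch below assembles that name. The account ID, region, repository name, and tag are placeholders, not values from this chapter.

```python
import re


def ecr_image_uri(account_id: str, region: str, repository: str, tag: str) -> str:
    """Build the fully qualified image name that Docker pushes and ECS pulls."""
    if not re.fullmatch(r"\d{12}", account_id):
        raise ValueError("AWS account IDs are 12 digits")
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


# Placeholder values: substitute your own account ID, region, and repository.
uri = ecr_image_uri("123456789012", "us-east-1", "news-api", "v1.0.0")
print(uri)  # 123456789012.dkr.ecr.us-east-1.amazonaws.com/news-api:v1.0.0
```

Tagging each build with an explicit version rather than relying only on `latest` is what makes it possible to tell which image a given deployment is running.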

Managed Databases (RDS + ElastiCache)

Your local PostgreSQL and Redis containers become fully managed services. RDS PostgreSQL provides automated backups, point-in-time recovery, and optional Multi-AZ failover. ElastiCache Redis offers managed caching, with automatic failover available when you run replicas. You'll migrate your database configuration from localhost to managed endpoints, gaining production-grade reliability with far less operational overhead.
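Moving from localhost to managed endpoints is easiest when connection strings come from environment variables, so the same image runs unchanged in both places. This is a minimal sketch of that idea. The variable names `DATABASE_URL` and `REDIS_URL`, the default URLs, and the hostnames are assumptions for illustration and may not match how the News API is actually configured.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    database_url: str
    redis_url: str


def load_settings(env=None) -> Settings:
    """Read connection strings from the environment, defaulting to local services."""
    env = os.environ if env is None else env
    return Settings(
        database_url=env.get(
            "DATABASE_URL", "postgresql://postgres:postgres@localhost:5432/news"
        ),
        redis_url=env.get("REDIS_URL", "redis://localhost:6379/0"),
    )


# Locally, nothing is set and the defaults point at localhost.
local = load_settings({})

# In production, the container environment supplies the managed endpoints
# (placeholder hostnames shown here).
production = load_settings({
    "DATABASE_URL": "postgresql://news:secret@db.example.internal:5432/news",
    "REDIS_URL": "redis://cache.example.internal:6379/0",
})
print(local.redis_url)
print(production.redis_url)
```

Because only the environment changes, no application code has to be edited when the database moves.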

Serverless Containers (ECS Fargate)

Your News API runs on ECS Fargate: a serverless container platform where you don't manage EC2 instances. You define what containers to run and how much CPU/memory they need. AWS handles the rest: provisioning servers, networking, health checks, and automatic restarts when containers fail. You'll create task definitions specifying your container configuration and ECS services maintaining your desired container count.
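A task definition is ultimately a JSON document. The sketch below builds one as a Python dictionary to show where CPU, memory, and environment variables live. The family name, container port, sizes, and image URI are illustrative assumptions. A real definition would also need items omitted here, such as an execution role and log configuration.

```python
import json


def build_task_definition(image_uri: str, environment: dict[str, str]) -> dict:
    """Return a minimal Fargate-style task definition as a plain dict."""
    return {
        "family": "news-api",                    # hypothetical name
        "networkMode": "awsvpc",                 # Fargate tasks use awsvpc networking
        "requiresCompatibilities": ["FARGATE"],
        "cpu": "256",                            # 0.25 vCPU, expressed as a string
        "memory": "512",                         # MiB, expressed as a string
        "containerDefinitions": [
            {
                "name": "news-api",
                "image": image_uri,
                "essential": True,
                "portMappings": [{"containerPort": 8000, "protocol": "tcp"}],
                # ECS expects environment variables as a list of name/value pairs.
                "environment": [
                    {"name": key, "value": value}
                    for key, value in sorted(environment.items())
                ],
            }
        ],
    }


task_def = build_task_definition(
    "123456789012.dkr.ecr.us-east-1.amazonaws.com/news-api:v1.0.0",  # placeholder
    {"REDIS_URL": "redis://cache.example.internal:6379/0", "LOG_LEVEL": "info"},
)
print(json.dumps(task_def, indent=2))
```

The ECS service then references this definition and keeps the requested number of copies running.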

Load Balancer (ALB)

The Application Load Balancer provides your public endpoint. It distributes incoming requests across multiple containers, performs health checks to remove failing containers from rotation, and handles SSL/TLS termination once you add a certificate for HTTPS. The ALB DNS name stays the same for the life of the load balancer, so your public URL remains stable even as containers start, stop, and restart behind it.
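Health checks decide rotation by counting consecutive results: a target must pass several checks in a row before it receives traffic and fail several in a row before it is removed. The following is a small local simulation of that rule, not the load balancer's actual implementation. The threshold values are illustrative.

```python
from dataclasses import dataclass


@dataclass
class TargetHealth:
    healthy_threshold: int = 3    # consecutive passes needed to enter rotation
    unhealthy_threshold: int = 2  # consecutive failures needed to leave rotation
    healthy: bool = False         # new targets start out of rotation
    _streak: int = 0              # consecutive results that disagree with current state

    def record(self, check_passed: bool) -> bool:
        """Record one health check result and return the resulting state."""
        if check_passed == self.healthy:
            # The result matches the current state, so any streak toward flipping resets.
            self._streak = 0
            return self.healthy
        self._streak += 1
        needed = self.healthy_threshold if check_passed else self.unhealthy_threshold
        if self._streak >= needed:
            self.healthy = check_passed
            self._streak = 0
        return self.healthy


target = TargetHealth()
results = [True, True, True, False, True, False, False]
states = [target.record(result) for result in results]
print(states)  # [False, False, True, True, True, True, False]
```

A single failed check does not remove the container, which keeps one slow response from taking a healthy target out of service.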

CloudWatch Logging

Once logging is configured, container logs stream to CloudWatch Logs. You'll configure ECS to send application logs to centralized log groups, then learn to view and query these logs for troubleshooting. When containers fail or APIs return errors, CloudWatch Logs show you exactly what happened. This basic logging foundation prepares you for the comprehensive monitoring you'll add in Chapter 29.
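Container log collection generally works by capturing what the process writes to standard output and standard error, so the application's job is simply to log there in a form that is easy to query. This sketch assumes that arrangement and emits one JSON object per line. The logger name `news_api` and the field names are hypothetical.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Format each record as a single-line JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        })


def configure_logging(stream=None) -> logging.Logger:
    """Send structured logs to stdout (or to `stream`, which is useful in tests)."""
    handler = logging.StreamHandler(stream if stream is not None else sys.stdout)
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger("news_api")  # hypothetical logger name
    logger.handlers = [handler]             # replace handlers to avoid duplicate lines
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


logger = configure_logging()
logger.info("fetched %d articles", 42)
```

Structured lines like these can be filtered by field when you search the log group, which is quicker than scanning free-form text.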

The final infrastructure connects like this: You push Docker images to ECR manually, authenticating Docker through the AWS CLI. ECS Fargate runs multiple containers pulled from ECR, configured through task definitions. Those containers connect to RDS PostgreSQL for article storage and ElastiCache Redis for caching. An Application Load Balancer distributes incoming requests across containers. CloudWatch collects logs from your containers, giving you visibility into application behavior.

Sized appropriately, this architecture can handle thousands of requests per second, survives individual container failures, and provides visibility into system behavior through logging. It's the infrastructure pattern companies use in production. Building it yourself, from scratch, proves you understand modern cloud deployments at a professional level. In Chapter 29, you'll add automation (CI/CD), comprehensive monitoring (CloudWatch dashboards and alarms), and elastic scaling (auto-scaling policies) to transform this deployment into full production operations.

Learning Objectives

What You'll Master in This Chapter

By the end of this chapter, you'll be able to:

- Explain cloud computing fundamentals and when to use AWS versus PaaS alternatives

- Set up AWS accounts securely with IAM users, billing alerts, and MFA protection

- Push Docker images to AWS ECR and manage container registry versioning

- Deploy containerized applications using ECS Fargate with proper task definitions and service configuration

- Migrate from local databases to managed RDS PostgreSQL and ElastiCache Redis

- Configure Application Load Balancers with health checks for high availability

- Set up CloudWatch Logs to capture and view container output for troubleshooting

- Configure security groups implementing least-privilege network access control

- Test deployed APIs through load balancer URLs and verify production functionality

- Optimize AWS costs using Free Tier resources and right-sizing strategies

The Professional Payoff

Infrastructure skills separate good developers from great ones. Anyone can write code that works locally. Professional developers write code that works reliably in production, scales under load, recovers from failures automatically, and provides visibility into system behavior. Those skills require infrastructure knowledge.

This chapter teaches production deployment patterns used by companies of all sizes. The specific services change—AWS versus Azure versus Google Cloud—but the patterns remain constant: containerize applications, use managed databases, distribute traffic through load balancers, centralize logging. Learning these patterns with AWS means understanding infrastructure at a fundamental level that transfers to any cloud platform.

When you complete this chapter, you'll have a live News API accessible at a public URL. You'll understand how containers run in the cloud, how databases are managed at scale, how load balancing enables reliability, and how logging provides visibility. These skills demonstrate infrastructure competency that most developers lack. In technical interviews, you can discuss production deployments confidently because you've built one yourself. That competency opens doors to senior engineering roles where infrastructure expertise is expected.

The journey from "works on my laptop" to "deployed on AWS" is transformative. You'll understand not just how to write code, but how to deploy it professionally. That's the difference between completing tutorials and building systems that serve real users. This chapter bridges that gap.